An In-Depth Look at AI Agents for Large Language Models

I'm going to keep working through the TinyAgent project and integrate it with my previous project, TinyCodeRAG. Going forward, I plan to focus on building a code knowledge base that is simple enough for personal deployment, so I've renamed the repository to TinyCodeBase (https://github.com/codemilestones/TinyCodeBase). Feel free to give it a star and stay tuned.

Before starting to build Agent capabilities, let's first discuss what an Agent is.

What is an Agent: From Traditional LLMs to Autonomous Agents

AI Agents are defined as autonomous software entities that execute goal-oriented tasks within constrained digital environments. They perceive structured or unstructured input, reason about contextual information, and take actions to achieve specific goals. Unlike traditional automation scripts, AI Agents show reactive intelligence and limited adaptability: they can adjust their outputs in response to dynamic inputs[1].

"Traditional" large language models have some limitations, such as:

- Poor capability in executing continuous and dynamic tasks

- Lack of tool-calling abilities, unable to interact with external environments

- Lack of control over long-term and short-term memory, unable to maintain focus on the same task over time

AI Agents emerged to address these issues, compensating for these gaps with memory, reasoning, and tool use.

Function Calling: The Foundation of Agent Action

Function Calling is a mechanism that allows large language models (such as GPT, Claude, etc.) to call external functions or services through structured instructions (like JSON). Its core logic is that the model does not execute functions itself. Instead, it generates a function name and parameters; the program parses that output, executes the actual function, and returns the result; finally, the model integrates the result into a natural language response[2].
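The program side of that loop can be sketched in a few lines. This is a minimal sketch, not the API of any particular provider: the JSON shape (`name` plus `arguments`), the `TOOLS` registry, and the `execute_tool_call` helper are assumptions made for illustration, and `code_check` here is a stand-in that only checks Python syntax with the built-in `compile`.

```python
import json


def code_check(language: str, source_code: str) -> str:
    """Stand-in tool (assumption): only checks Python syntax, nothing more."""
    if language.lower() != "python":
        return f"Unsupported language: {language}"
    try:
        compile(source_code, "<code_check>", "exec")
    except SyntaxError as err:
        return f"SyntaxError: {err.msg} (line {err.lineno})"
    return "No syntax errors found"


# Registry mapping the tool names the model may emit to Python callables.
TOOLS = {"code_check": code_check}


def execute_tool_call(model_output: str) -> str:
    """Parse a JSON tool call generated by the model and run the matching function."""
    call = json.loads(model_output)
    name = call["name"]
    arguments = call.get("arguments", {})
    if name not in TOOLS:
        return f"Error: unknown tool '{name}'"
    return TOOLS[name](**arguments)


# Pretend the model produced this string instead of a natural language answer.
model_output = (
    '{"name": "code_check", '
    '"arguments": {"language": "python", "source_code": "print(1)"}}'
)
print(execute_tool_call(model_output))  # prints: No syntax errors found
```

The returned string would then be appended to the conversation so the model can use it when writing its final answer.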

The key to Function Calling is a prompt, prepared in advance, that tells the large model which tools exist and how to call them. Here's a simple example.

Answer the following question to the best of your ability. You can use the following tools:

google_search: Call this tool to interact with the Google Search API. What does the Google Search API do? Google Search is a general-purpose search engine that can be used to access the internet, query encyclopedic knowledge, stay updated on current events, etc.

parameters: [

{

'name': 'search_query',

'description': 'Search keyword or phrase',

'required': True,

'schema': {'type': 'string'},

}

]

Please format parameters as JSON objects.

code_check: Call this tool to interact with the Code Check API. What does the Code Check API do? Code Check is a code checking tool that can be used to check code for errors and issues.

parameters: [

{

'name': 'language',

'description': 'Full language name',

'required': True,

'schema': {'type': 'string'},

},

{

'name': 'source_code',

'description': 'Source code',

'required': True,

'schema': {'type': 'string'},

}

]

Please format parameters as JSON objects.

Start!

You can see that the prompt first tells the large model that it has two tools available, google_search and code_check, and explains what each one is for.

Then it tells the large model the parameter requirements for each tool call.
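A prompt like this is usually generated from tool definitions rather than written by hand. The sketch below shows one way to do that, under stated assumptions: the `TOOL_SPECS` structure, its `title` field, and the `build_tool_prompt` helper are hypothetical names, and the parameters are serialized with `json.dumps`, so they appear as JSON (`true`, double quotes) rather than in the Python-literal style of the example above.

```python
import json

# Tool definitions mirroring the example prompt. The dict layout is an
# assumption of this sketch, not a fixed standard.
TOOL_SPECS = [
    {
        "name": "google_search",
        "title": "Google Search",
        "description": "Google Search is a general-purpose search engine.",
        "parameters": [
            {
                "name": "search_query",
                "description": "Search keyword or phrase",
                "required": True,
                "schema": {"type": "string"},
            }
        ],
    },
    {
        "name": "code_check",
        "title": "Code Check",
        "description": "Code Check is a tool that checks code for errors and issues.",
        "parameters": [
            {
                "name": "language",
                "description": "Full language name",
                "required": True,
                "schema": {"type": "string"},
            },
            {
                "name": "source_code",
                "description": "Source code",
                "required": True,
                "schema": {"type": "string"},
            },
        ],
    },
]

TOOL_TEMPLATE = (
    "{name}: Call this tool to interact with the {title} API. "
    "What does the {title} API do? {description}\n"
    "parameters: {parameters}\n"
    "Please format parameters as JSON objects."
)

HEADER = (
    "Answer the following question to the best of your ability. "
    "You can use the following tools:"
)


def build_tool_prompt(tools) -> str:
    """Render one description section per tool, framed by the header and 'Start!'."""
    sections = [
        TOOL_TEMPLATE.format(
            name=tool["name"],
            title=tool["title"],
            description=tool["description"],
            parameters=json.dumps(tool["parameters"]),
        )
        for tool in tools
    ]
    return "\n\n".join([HEADER, *sections, "Start!"])


print(build_tool_prompt(TOOL_SPECS))
```

Keeping the definitions as data means the same list can drive both the prompt and the dispatch step that later executes the calls.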

The large model then decides on its own when to call these tools during inference. This ability is not innate: today's mainstream large models have all been fine-tuned on function calling datasets and are familiar with this prompting pattern, which is why their tool calling works so smoothly.

If you're using an older model that lacks this training, tool calling may be noticeably less reliable.

Function Calling is the core driving force behind AI Agent capabilities. It lets the model decide on its own when to bring in an external tool, whether the goal is to manage memory or to extend what the model can do.

It also spares us from hand-coding the control flow around each LLM call: the model itself drives the process.

In the next article, we'll continue with hands-on practice of the TinyAgent project, specifically implementing two Function Calling tools: a code check tool (code_check) and a code retrieval tool (code_search).

CoT (Chain of Thought): Agent's Reasoning Engine

Chain of Thought (CoT) is a technique that explicitly guides large language models through step-by-step reasoning to improve their handling of complex tasks. The model first writes out intermediate reasoning steps, then produces its final answer based on those steps.

CoT is not an architectural improvement to the model itself, but a prompt engineering strategy. Typical prompts look like this:

For solving math problems, use the following prompt:

Please think through the following steps:

1. Clarify the core of the problem;

2. Break down key variables;

3. Derive logic step by step;

4. Synthesize conclusions.

For a translation problem, use the following prompt:

Please translate the following English to Chinese in three steps:

1. Literal translation (preserve original meaning);

2. Reflect on language habits (adjust sentence structure);

3. Optimize expression (concise and elegant).

English: {text}

You can also simply add "please think step by step" to your prompt, which nudges the large model to write out a longer reasoning process before it answers.

As with function calling, today's mainstream large models have been trained extensively on CoT-style data and respond well to such instructions.
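Because CoT is only a prompting strategy, applying it in code amounts to string construction. The helpers below are a minimal sketch: `build_cot_prompt` and `add_step_by_step` are hypothetical names, and the train problem is an invented example used only to show the output.

```python
from typing import Sequence


def build_cot_prompt(task: str, steps: Sequence[str]) -> str:
    """Prefix a task with an explicit, numbered list of reasoning steps."""
    numbered = "\n".join(f"{i}. {step}" for i, step in enumerate(steps, start=1))
    return f"Please think through the following steps:\n{numbered}\n\n{task}"


def add_step_by_step(question: str) -> str:
    """Zero-shot variant: append the generic trigger phrase to any question."""
    return f"{question}\nPlease think step by step."


MATH_STEPS = [
    "Clarify the core of the problem",
    "Break down key variables",
    "Derive logic step by step",
    "Synthesize conclusions",
]

# Invented example problem, for illustration only.
problem = "Problem: A train travels 120 km in 2 hours. What is its average speed?"

print(build_cot_prompt(problem, MATH_STEPS))
print()
print(add_step_by_step(problem))
```

The structured variant gives more control over how the model reasons, while the one-line variant is easier to bolt onto existing prompts.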

What is ReAct

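ReAct is also a prompting framework for large language models. It was first proposed in the paper "ReAct: Synergizing Reasoning and Acting in Language Models"[3]. Its core idea is to interleave reasoning with actions, so that the result of each action feeds the next reasoning step and the model can complete more complex tasks.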

The ReAct framework prompt is as follows:

Please use the following format:

Question: The question you must answer

Thought: You should always think about what to do

Action: The action you should take, should be one of: [{tool_names}]

Action Input: Input for the action

Observation: Result of the action

... (this Thought/Action/Action Input/Observation can be repeated zero or more times)

Thought: I now know the final answer

Final Answer: The final answer to the original input question

Start!

This prompt guides the large model to interleave thinking and tool calling. The model not only reasons before it calls a tool but also digests what the tool returns, and finally integrates all the information to reach a reliable conclusion.
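The control loop behind this format can be sketched as follows. This is an illustrative sketch rather than the TinyAgent implementation: the model is replaced by scripted replies so the code runs offline, `word_count` is a hypothetical tool, and the regular expression used to parse `Action` and `Action Input` is an assumption. A real integration would typically also stop generation before the model writes its own `Observation` line.

```python
import re


def word_count(text: str) -> str:
    """Hypothetical tool: count whitespace-separated words."""
    return str(len(text.split()))


TOOLS = {"word_count": word_count}

# Matches the "Action:" line and everything after "Action Input:".
ACTION_RE = re.compile(r"Action:\s*(.+?)\s*\nAction Input:\s*(.*)", re.DOTALL)


def make_scripted_llm(replies):
    """Return a fake model that replays canned replies, one per call."""
    pending = list(replies)

    def llm(prompt: str) -> str:
        return pending.pop(0)

    return llm


def react_loop(question: str, llm, tools, max_steps: int = 5) -> str:
    """Alternate model output and tool observations until a final answer appears."""
    transcript = f"Question: {question}\n"
    for _ in range(max_steps):
        reply = llm(transcript)
        transcript += reply + "\n"
        if "Final Answer:" in reply:
            return reply.split("Final Answer:", 1)[1].strip()
        match = ACTION_RE.search(reply)
        if match is None:
            transcript += "Observation: could not parse an action\n"
            continue
        name = match.group(1)
        # Drop any Observation text the model may have invented itself.
        tool_input = match.group(2).split("\nObservation:")[0].strip()
        tool = tools.get(name)
        observation = tool(tool_input) if tool else f"unknown tool '{name}'"
        # Feed the tool result back so the next Thought can build on it.
        transcript += f"Observation: {observation}\n"
    return "Stopped: step limit reached without a final answer"


llm = make_scripted_llm([
    "Thought: I should count the words with a tool.\n"
    "Action: word_count\n"
    "Action Input: the quick brown fox",
    "Thought: I now know the final answer\n"
    "Final Answer: The text has 4 words.",
])
print(react_loop("How many words are in 'the quick brown fox'?", llm, TOOLS))
# prints: The text has 4 words.
```

The `max_steps` limit is a design choice of this sketch: it keeps a model that never emits `Final Answer` from looping forever.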

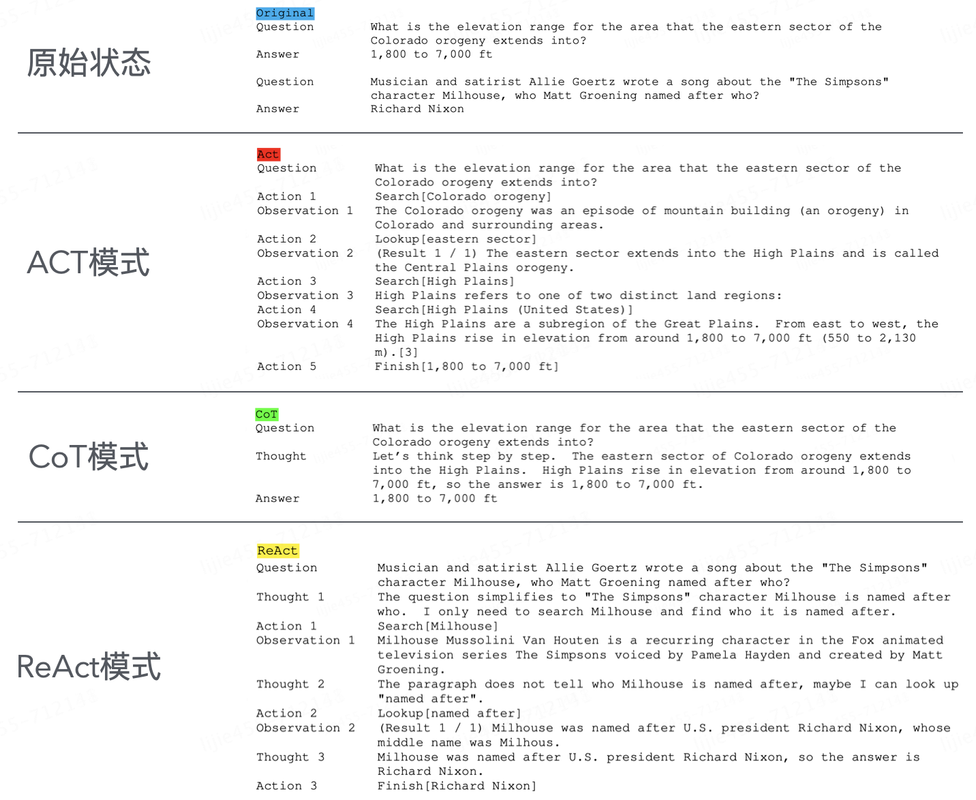

Output comparison of traditional LLM, function calling, CoT, and ReAct

The effectiveness of these prompt engineering techniques can be compared in the diagram above. In the next article, we'll implement AI Agent capabilities for TinyCodeBase following the ReAct structure.

Summary

Today we looked at what Agents are at a theoretical level and clarified the differences between several key concepts: function calling, CoT, and ReAct.

On the surface, simply asking for "deep thinking" in the prompt appears to significantly enhance a large language model's capabilities.

But what truly makes the decisive difference is that the model has been specifically trained on these prompt patterns through supervised fine-tuning (SFT). Without that training, response quality drops dramatically.

In the next article, we'll add AI Agent capabilities to the TinyCodeBase project (https://github.com/codemilestones/TinyCodeBase) based on the ReAct framework. Stay tuned for future progress!

References

[1] Goodbye AI Agents, Hello Agentic AI! (https://mp.weixin.qq.com/s/5_pjJLo5zDCwygcgM4A6xQ)

[2] Function Calling: Basics of AI Models Calling External Functions (https://www.toutiao.com/article/7485861716417315354/)

[3] ReAct: Synergizing Reasoning and Acting in Language Models (https://arxiv.org/abs/2210.03629)