The 30-Minute Boundary of AI Workflows

Today I spent the entire day messing around with AI workflows: BMAD, ralph-loop, planning-files, helloAgent, trying them one after another. The result? Still stuck.

Just as I was questioning my life choices, I came across an article from Metr.org that woke me right up.

(https://metr.org/blog/2025-03-19-measuring-ai-ability-to-complete-long-tasks/)

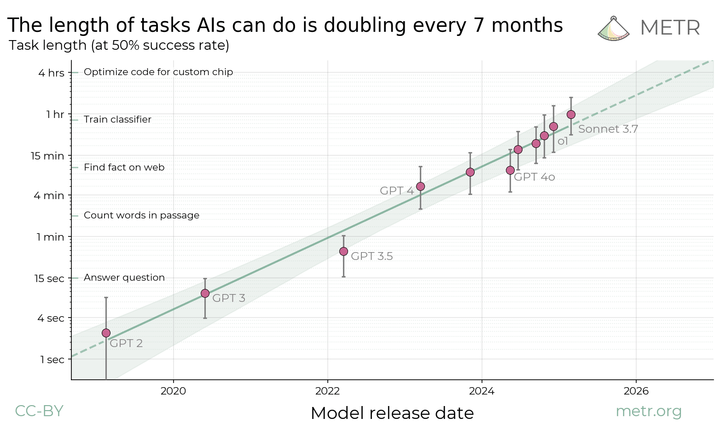

The article is mainly about how fast AI is improving: the length of tasks AI can complete doubles roughly every seven months. But what I care about more is: how capable is AI right now?

One figure in the article surprised me: current top models, like GPT-5.2 and Opus 4.5, can complete tasks that take a human up to 2.5 hours, at a 50% success rate. But there's a catch: that figure means succeeding only half the time.

What really matters is the 80% success rate, and that number is only 30 minutes.

Honestly, this data is quite sobering.

You ask if current models are strong? Definitely. But what does 30 minutes mean? It's work that a human could finish by sitting there for half an hour, and AI has only an 80% chance of getting it done. If you're using Chinese domestic models like Zhipu, the success rate might be even lower.

This suddenly made me realize something: before building workflows, you first need to gauge how big the task is.

If the task takes more than half an hour, can you really automate it with scripts? Doubtful.

You can use the file system as an intermediary, you can have infinite context, but once task complexity exceeds half an hour of human work, the failure rate shoots up.

Multi-agent systems are an even worse disaster zone.

How to Improve an Agent's Ability to Handle Complex Problems

Actually, everyone is currently working hard on this, hoping AI can handle more and more difficult tasks.

One approach is to improve LLM performance. At the current rate of doubling every 7 months, the task length AI can handle at an 80% success rate could extend from 30 minutes to 2 hours by 2027 and to 8 hours by 2028, basically one human workday. By 2029 it could reach 32 hours, about a week's work. At that point it would be solid.
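
The extrapolation is easy to reproduce. Here is a minimal sketch, assuming the 80% horizon starts at 30 minutes and the 7-month doubling holds steady (a big assumption). The function name is my own, not something from the METR article.

```python
def projected_horizon_minutes(start_minutes: float,
                              months_elapsed: float,
                              doubling_months: float = 7.0) -> float:
    """Task length (minutes) reachable after `months_elapsed`,
    assuming the horizon doubles every `doubling_months`."""
    return start_minutes * 2 ** (months_elapsed / doubling_months)


# Two doublings take 14 months, four take 28, six take 42.
for months in (0, 14, 28, 42):
    hours = projected_horizon_minutes(30, months) / 60
    print(f"+{months} months: {hours:g} hours")
# +0 months: 0.5 hours
# +14 months: 2 hours
# +28 months: 8 hours
# +42 months: 32 hours
```

So each 4x jump takes about 14 months, which is roughly the yearly pace in the estimate above.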

Another approach is task decomposition, breaking big tasks into small chunks under half an hour each. Sounds reasonable, right?

But here's the problem. Let's do some simple math: if you break a 1.5-hour task into three serial 30-minute subtasks, each with an 80% success rate, and the steps fail independently, the overall success rate is 0.8 × 0.8 × 0.8 = 0.512. Yet the 50% success horizon is already 2.5 hours, so a 1.5-hour task attempted in one go should already succeed more than half the time. You gain nothing by breaking it down, and you probably lose!
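
To see how quickly this compounds, here is a small sketch. It assumes every subtask succeeds or fails independently and that one failure sinks the whole chain. `chain_success` is a name I made up for illustration.

```python
from math import prod


def chain_success(step_success_rates):
    """Probability that every step in a serial chain succeeds,
    assuming the steps are independent."""
    return prod(step_success_rates)


# Three 30-minute subtasks at 80% each.
print(round(chain_success([0.8, 0.8, 0.8]), 3))  # 0.512

# Ten such subtasks in a row: the chain almost never completes.
print(round(chain_success([0.8] * 10), 3))  # 0.107
```

The longer the chain, the worse it gets, which is why decomposition alone doesn't buy you reliability.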

So the most reliable strategy right now? Use the strongest model to chew on the hardest bones.

If you really want to break it down, fine, but don't expect full automation. Task decomposition has two prerequisites: first, tasks must be parallelizable; second, each step must be verifiable. Otherwise, breaking it down is pointless.
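
Why does verifiability matter so much? If you can check each step, you can retry the failures. Here is a rough sketch under optimistic assumptions of my own: the verifier never makes mistakes, and every retry is an independent 80% shot. Real agents tend to repeat the same mistake, so treat these numbers as an upper bound. Both function names are hypothetical.

```python
def step_success_with_retries(p: float, max_attempts: int) -> float:
    """Chance that a step passes verification within `max_attempts`
    independent tries, each succeeding with probability `p`."""
    return 1 - (1 - p) ** max_attempts


def chain_success_with_retries(p: float, steps: int, max_attempts: int) -> float:
    """Chance that a serial chain of `steps` verified steps all pass."""
    return step_success_with_retries(p, max_attempts) ** steps


# Three serial 80% steps, with 1, 2, or 3 attempts allowed per step.
for attempts in (1, 2, 3):
    print(attempts, round(chain_success_with_retries(0.8, 3, attempts), 3))
# 1 0.512
# 2 0.885
# 3 0.976
```

Without verification you are stuck at the first row. The gain comes from the checking, not from the splitting.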

In the end, for work over 2 hours, humans are still more reliable for now. But this situation is changing fast—could be overturned any day.

Speaking of which, I remember writing an earlier article about AI coding workflows. At the time, I put a lot of energy into building an automated process with 5-step loops and two-layer judgments. It looked perfect.

But seeing this data, I suddenly understood something I hadn't figured out before: AI automation is not a cure-all. It has boundaries.

Where's the boundary? 30 minutes.

Beyond that time, Agents become less reliable than humans.

But this doesn't mean AI is useless. Quite the opposite—its value lies in helping you handle those repetitive, mechanical, under-30-minute small tasks. Truly complex tasks still need human judgment.

Some things AI does well, some things require humans.

The key? You need to know when to let AI take over, and when to do it yourself.

This is why I wrote this today—not because AI isn't capable, but because I'm clearer now on where its boundaries lie.